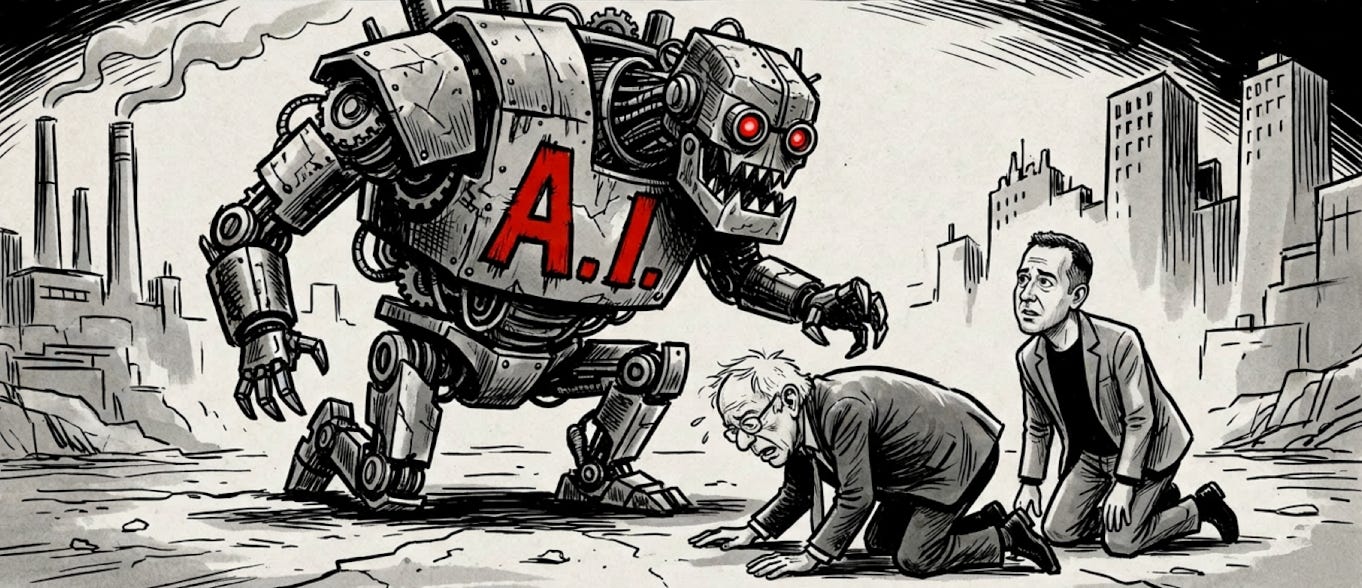

Who's afraid of AI?

Nobody should be really, except for the know-nothings and a few know-it-alls

Who’s afraid of AI?

Ironically, those who know the least about it.

And those who know the most about it!

There is a venerable tradition in American politics of glibly announcing the next super-sized threat to civilization before civilization has even had a chance to engage with what is supposed to be gravely menacing it.

Communism, fluoride in the water, rock and roll, the satanic backmasking of lyrics to popular songs, and — yes — the internet itself have all swapped turns as the rising beast of the impending apocalypse.

Each time, the most strident voices came from those with the least firsthand knowledge of the subject.

The advent of artificial intelligence, or AI, has done nothing to switch off this dynamic. The only difference, perhaps, is the velocity at which misinformation now travels around the globe.

On this occasion, however, there happens to be a curious twist.

The most alarmist voices are not only those who know little about AI. They also include some of those who know the most about it.

Both parties are, for different reasons, scaring the bejesus out of a perplexed public that has little to no clue about the trajectory of AI and would rather revel in fantasy role-playing than take time for reasoned inquiry and deliberation.

As for the most prominent among the know-the-leastest, dear readers, please consider the case of Bernie Sanders, the curmudgeonly “Democratic socialist” independent senator from Vermont, with his Alfred E. Neuman-esque “What, me worry?” slant toward public spending and the national debt, together with his “make the fat cats pay for all that in perpetuity” plan to enforce fiscal discipline at the national level.

Just last week Sanders published a Wall Street Journal op-ed whose subtitle seemed designed to terrify the most clueless: AI is “a threat to everything the American people hold dear.”

Sanders argued that the AI revolution is being boostered by billionaires like Elon Musk, Jeff Bezos, and Mark Zuckerberg, who are investing enormous sums not to improve life for working families but to further aggrandize themselves by flaunting their own obscene wealth and political puissance.

Of course, even if Elon, Jeff, and Zuck are conspicuously conspiring to pauperize each and every one of us, it has virtually nothing to do with either the promise or perils of AI.

Sanders has spent his entire career inciting the laboring class against the asset class. He could have said the same thing about the railroad, the telephone, and even the transistor.

And let’s not forget Kelly McKinney, the former deputy commissioner at the New York City Office of Emergency Management, who authored his own jeremiad in The Hill warning us about a crushing tidal wave of job destruction that AI is poised to unleash.

McKinney’s framing mirrors his background in disaster management. His observation that “people do not respond to vague warnings” is a useful mental model for hurricanes. It hardly suffices, however, for predictions about intricate and conglomerate economic changes over many decades, in which new technologies will simultaneously eliminate and generate particular kinds of jobs.

In his opinion article McKinney neglects to examine the actual evidence on AI and labor markets. He treats the most horrendous predictions as established fact.

But what do the know-nothings know when the know-everythings seem to know — well, not much it turns out?

Enter stage right Dario Amodei, the CEO of Anthropic and the most technically precocious among princely, pre-eminent poobahs when it comes to artificial intelligence. His Einsteinian comprehension of scaling laws, capability curves, and principles of market diffusion remains unsurpassed.

Yet Amodei has a (bad) habit of making public pronouncements that glissade from applause-worthy technical genius, as manifest in Anthropic’s own success, into eyebrow-raising, if not cringe-worthy, woo-wooish episodes of occult conjecture — for instance, his much publicized suggestion that AI systems may be self-conscious and sentient, or at least approaching that capability.

Anthropic has allowed its Claude models to publish “reflections” on its own Substack. Amodei is quoted warning of a “tsunami” of AI disruption and claiming that AI systems are on the verge of “reaching human-level intelligence,” or what in AI parlance is known as artificial general intelligence (AGI).

Amodei’s critics counter that Amodei is merely going off the rails in doing what human beings naturally do with pets, boats, cars, and power tools, namely, wallowing in anthropomorphism.

As NPR host Tonya Mosley asked dryly in a rousing panel discussion with sundry AI experts, “It’s just software, right?”

The problem is that nobody — not Amodei, not the brainiest of the cognitive psychology braintrust — actually knows what sentience is.

The distinguished philosopher David Chalmers, who coined the phrase “the hard problem of consciousness” in 1995, defined the problem as one of why and how any physical system might give rise to subjective experience in the first place. Chalmers tells us that sentience itself is an age-old conundrum that theorists in the last two millennia have spectacularly failed to resolve.

As the author of the article “Consciousness” in the Stanford Encyclopedia of Philosophy states clearly, such a question remains wide open, and not just among philosophers.

Even a complete functional description of a given system, which philosophers tell us must specify everything the system does, such as how it processes information or how it reacts to stimuli, cannot tell us whether the system itself has any inner experience at all.

A 2024 survey in Acta Analytica by philosopher Tobias Wagner-Altendorf minced few words in profiling the real issue.

While empirical neuroscience has made major strides, according to the survey, in mapping the correlates of conscious states, philosophical progress concerning the “hard problem” has been “much less pronounced.”

Chalmers himself was overly optimistic, Wagner-Altendorf insists, in predicting that advances in scientific theory itself would eventually crack the code of consciousness.

Cognitive theory as a whole sports more than three hundred competing prototypes that purport to explain consciousness, none of which commands even a remote consensus.

We do not know, in point of fact, what consciousness is, or where it comes from. We do not know the necessary and sufficient conditions for it to exist at all.

Given this mind-boggling uncertainty about what sentience is even in the biological forms with which we are most familiar, that is, ourselves, the confidence with which AI developers speak of “machine consciousness” is to be taken with a humongous grain of salt.

A review paper in PhilPapers by multiple authors notes that “conversations on AI sentience can risk ambiguity without distinguishing the sense of ‘sentience’ involved,” and that “many believe that questions involving AI consciousness cannot be fully understood, much less answered, except by considering particular theories of consciousness.”

Metaphysics aside, we need to investigate the even more sinister AI bugbear that the tech titans are touting: smart machines banishing smart human beings from the labor market. The picture, in fact, happens to be far more convoluted than either the catastrophists or the pollyannas are willing to admit.

A benchmark MIT study published last week traces a much-needed factual baseline with rare precision.

According to the report, present AI systems are already capable of taking over tasks associated with roughly 11.7 percent of the U.S. labor market. That affects approximately 151 million workers with about $1.2 trillion in wages, a hefty number.

Nonetheless, the research also finds that we are several years away from AI achieving high-value success across task categories, which implies that workers may have more time to adapt than the most disturbing headlines suggest.

The research also shows that companies adopting AI see substantial gains in revenue, profits, and employment with that growth translating into net job creation. Ergo, contra Sanders, it is apparent that AI is not merely a wealth extraction mechanism.

Much like prior technological revolutions, AI appears to be disrupting some sectors of the economy while enhancing others, eliminating certain categories of work while creating demand for new forms of it.

A Brookings Institution report published this week further sharpens the picture.

The Brookings data identifies over 15 million workers without four-year degrees in jobs that are highly exposed to AI. Almost 11 million of these jobs are in “Gateway” occupations, which have historically enabled workers to acquire new skills and transition to higher-wage positions.

According to Brookings, AI threatens to erode the pathways workers use to climb from low to higher-wage work, nearly half of which are highly exposed to AI disruption. The magnitude of the threat demands targeted intervention in specific labor-market pathways, investment in reskilling and credential portability, and careful monitoring of how AI adoption alters hiring patterns at ground level.

But it does not militate in favor of Sanders’ proposed “robot tax” on all corporations that replace workers with machines, which would penalize the very productivity gains that MIT and Brookings show lead to overall employment growth.

Research by David Autor and his colleagues using census data demonstrates that 60 percent of workers in 2022 were employed in occupations that did not exist in 1940. Furthermore, roughly 85 percent of employment growth over the last 80 years was propelled by technological innovation.

Each technological innovation has over time been responsible for a net expansion of both economic prosperity and freedom from monotonous and exploitive work conditions. There is no reason to believe AI will be all that different, unless bad policy makes bad prophecy self-fulfilling.

Sanders, Amodei, and their ilk, unfortunately, have succeeded only in amplifying public fear in ways that serve their narrow ideological prejudices while jamming the thought waves for serious policy conversation.

Similarly, ill-informed popular mythologies propagated by self-serving public figures who are out of their academic league will discourage the very investments in safety research and equitable deployment that could make the transition to AI largely beneficial for the average working Joe and Jane.

Inevitably, these blowhards will cultivate the soil for less scrupulous actors, both foreign and domestic, to develop and deploy dangerous modalities of AI without the democratic accountability that either Sanders or Amodei demands.

Who’s afraid of AI?

None of us should really be afraid, even though we should remain ever vigilant — and naturally cautious.

We ought to remember the “famous last words” of Thomas Watson, CEO and founder of IBM, who said with all conviction: “I think there is a world market for maybe five computers.”

Or those of astronomer Clifford Stoll in a 1995 interview when he boldly declared that “the internet is just a fad.”

Even Amodei, one of the godfathers of AI himself, may one day have to eat his own (famous last) words.